Kubernetes, the open-source container orchestration system, automates software deployment, scaling, and management. According to a recent independent study from Red Hat, 70% of IT leaders reported that their organization currently uses Kubernetes, and a CNCF report found that 96% of organizations are either using or evaluating the technology. Adoption is expected to keep rising: CNCF estimates that by 2023 the majority of applications will use cloud-native technologies.

While Kubernetes has been very useful for developers, keeping its costs under control has become an operational challenge. The very features that make the system so appealing, its scalability and autonomy, can lead to runaway expenses if left unchecked or under-monitored.

In this article, we explore why it is so difficult to achieve the Kubernetes cost-performance sweet spot and share some best practices for optimizing your Kubernetes infrastructure.

- Achieving the Kubernetes Cost-Performance Sweet Spot

- Continuous, Autonomous Kubernetes Optimization

- How Does Continuous Pod Rightsizing Work?

- Cost Optimization Via Application Performance Optimization

Achieving the Kubernetes Cost-Performance Sweet Spot

DevOps teams should strive towards the Kubernetes Cost-Performance Sweet Spot, the perfect combination of cost and performance, in order to get the most out of their environment. The issue is that there is always a tradeoff between cost and performance.

There are four primary challenges that explain why it’s not so simple to find that perfect cost-performance balance:

Challenge #1: A Constantly Changing Environment

The difficult task of finding the sweet spot becomes even more difficult when you realize that the environment is constantly changing. It becomes like trying to shoot an arrow at a target that is moving up and down, left and right, as well as forwards and backwards.

The following factors make for a dynamic and constantly changing environment:

- Peaks and valleys of usage, whether they are tied to events (e.g., Black Friday, the Super Bowl), seasons (e.g., summer break, the Christmas season), unpredictable occurrences (e.g., COVID-19, natural disasters) or the day of the week and time of day.

- Application changes like software updates to combat vulnerabilities or to add new features.

- Infrastructure changes, such as hot swaps and fixes for pod failures.

With so many variables that can change the required resources in an unpredictable manner, achieving the cost-performance sweet spot manually becomes untenable.

Challenge #2: Scaling From Single Deployment to Multi-Cluster

To prioritize your cost optimization activities, you need a view that spans every cluster rather than a single deployment. This lets you rank work by its cost reduction impact. In other words, with full multi-cluster visibility, your DevOps team can spend its time optimizing the deployments that will actually improve your bottom line.
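As a rough illustration of prioritizing by cost reduction impact, the sketch below ranks deployments from several clusters by how much reserved CPU sits idle. The `Deployment` fields, the sample fleet, and the price per core are all hypothetical values chosen for the example, not figures from any real environment or from Granulate's product.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    cluster: str
    name: str
    replicas: int
    cpu_requested: float  # cores requested per pod
    cpu_used: float       # observed cores used per pod (e.g. a high percentile)


# Hypothetical price per CPU core per month; substitute your provider's rate.
CPU_CORE_MONTHLY_COST = 25.0


def monthly_waste(d: Deployment) -> float:
    """Estimated monthly cost of CPU that is reserved but not used."""
    idle_cores = max(d.cpu_requested - d.cpu_used, 0.0) * d.replicas
    return idle_cores * CPU_CORE_MONTHLY_COST


def rank_by_savings(deployments: list[Deployment]) -> list[Deployment]:
    """Order deployments from largest to smallest potential saving."""
    return sorted(deployments, key=monthly_waste, reverse=True)


# Hypothetical fleet spanning two clusters.
fleet = [
    Deployment("us-east", "checkout", replicas=10, cpu_requested=2.0, cpu_used=0.5),
    Deployment("eu-west", "search", replicas=40, cpu_requested=1.0, cpu_used=0.75),
    Deployment("us-east", "batch-report", replicas=2, cpu_requested=4.0, cpu_used=3.5),
]

for d in rank_by_savings(fleet):
    print(f"{d.cluster}/{d.name}: ~${monthly_waste(d):.2f} per month reserved but idle")
```

Even in this toy fleet, the deployment with the largest per-pod request is not the one with the largest saving. Replica count and the gap between requested and used resources matter more, which is why a fleet-wide ranking is useful.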

Challenge #3: Existing Tools Fail to Combat Overspend or Complement HPA

While there are tools available to monitor and improve Kubernetes systems, they overwhelmingly overlook cost issues. Even with the most effective of these solutions, Kubernetes overspending can get out of hand.

These existing tools fail to address the factors that cause overspend: autoscaling inefficiencies with the Horizontal Pod Autoscaler (HPA), over-provisioning, and the dynamic loads, application updates and infrastructure instability that are part and parcel of Kubernetes environments.

Challenge #4: Siloed Teams Conflict With Each Other

Different teams that are required to run a Kubernetes infrastructure are often separated in terms of both communication and KPIs. Application engineers are responsible for setting resource requests and they prioritize performance, meeting SLAs and ensuring stability at all costs. Meanwhile, DevOps and FinOps teams are tasked with overseeing the budget.

It’s understandable why these teams, with their different priorities, might conflict with each other, especially in enterprises where communication between departments might not be optimal.

Continuous, Autonomous Kubernetes Optimization

This is where Granulate’s Kubernetes capacity optimization tool comes into play.

Overcome the above cost optimization challenges with autonomous rightsizing of pod CPU and memory, applied continuously through auto-apply. The solution eliminates over-provisioning and reduces infrastructure costs while meeting guaranteed SLAs. Users can scale seamlessly from a single Kubernetes deployment to a multi-cluster solution with a single command-line deployment.

The continuous and autonomous nature of the solution ensures that applications stay stable and resources stay optimized no matter what happens to the environment, whether it be a peak in usage, a software update or an infrastructure upgrade. By constantly targeting the cost-performance sweet spot, spending is reduced by up to 63%. With SLAs confidently met, performance at optimal levels and a lower cost, both application engineers and DevOps/FinOps teams can rest assured that they’re reaching their KPIs.

Granulate’s capacity optimization also works hand in hand with HPA, effectively replacing the Vertical Pod Autoscaler (VPA). Until now, Kubernetes users have generally been unable to run HPA and VPA together on the same CPU or memory metrics. By replacing the old-school VPA, they can eliminate that pesky limitation and keep their existing HPA scaling strategies.
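To see why vertical and horizontal scaling interact, recall that HPA measures resource utilization as a percentage of each pod's request, and that the Kubernetes documentation gives its core rule as desired replicas = ceil(current replicas × current metric / target metric). The sketch below applies that rule with hypothetical numbers. It omits HPA's tolerance and stabilization behavior and says nothing about how Granulate's tool is implemented.

```python
import math


def cpu_utilization_pct(usage_millicores: int, request_millicores: int) -> float:
    """HPA-style utilization: usage as a percentage of the pod's CPU request."""
    return 100.0 * usage_millicores / request_millicores


def desired_replicas(current_replicas: int, current_pct: float, target_pct: float) -> int:
    """Core HPA scaling rule, without tolerance or stabilization windows."""
    return math.ceil(current_replicas * current_pct / target_pct)


usage = 300     # millicores actually used per pod (hypothetical)
target = 60.0   # HPA target average utilization in percent (hypothetical)
replicas = 4    # current replica count (hypothetical)

# Same real usage, two different CPU requests: before and after a vertical change.
for request in (1000, 400):
    pct = cpu_utilization_pct(usage, request)
    print(f"request={request}m utilization={pct:.0f}% "
          f"desired_replicas={desired_replicas(replicas, pct, target)}")
```

With identical real usage, lowering the request raises the measured utilization and changes HPA's decision from scaling in to scaling out. Any rightsizing approach that runs alongside HPA has to account for this coupling.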

How Does Continuous Pod Rightsizing Work?

Deploying the secure agent is quite simple, requiring only a single command line. Once deployed, it lets users monitor workloads and resources, such as CPU and memory, via statistical sampling. Users are then given rightsizing recommendations that, when applied, allow them to hit the cost-performance sweet spot.

These recommendations can be applied either manually or automatically with auto-apply, at the click of a button.
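One common way to turn sampled usage into a rightsizing recommendation is to take a high percentile of the samples and add some headroom. The sketch below is a minimal illustration of that general idea and not Granulate's actual algorithm. The function names, the 95th percentile, the 15% headroom, and the sample data are all assumptions made for the example.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(math.ceil(pct * len(ordered) / 100.0), 1)
    return ordered[rank - 1]


def recommend_request(samples: list[float], pct: float = 95.0, headroom: float = 0.15) -> float:
    """Suggest a request: a high percentile of observed usage plus safety headroom."""
    return percentile(samples, pct) * (1.0 + headroom)


# Hypothetical CPU usage samples for one container, in millicores.
cpu_samples = [120, 135, 150, 140, 160, 155, 145, 130, 480, 150,
               142, 138, 149, 151, 147, 133, 158, 162, 139, 144]
current_request = 1000  # hypothetical configured request, in millicores

suggested = recommend_request(cpu_samples)
print(f"current request: {current_request}m, suggested: {suggested:.0f}m")
```

In this sample the nearest-rank 95th percentile is 162m, so the single 480m spike does not drive the recommendation. Whether such spikes should be covered by the request, by limits, or by horizontal scaling is a policy choice that depends on the workload's SLA.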

Cost Optimization Via Application Performance Optimization

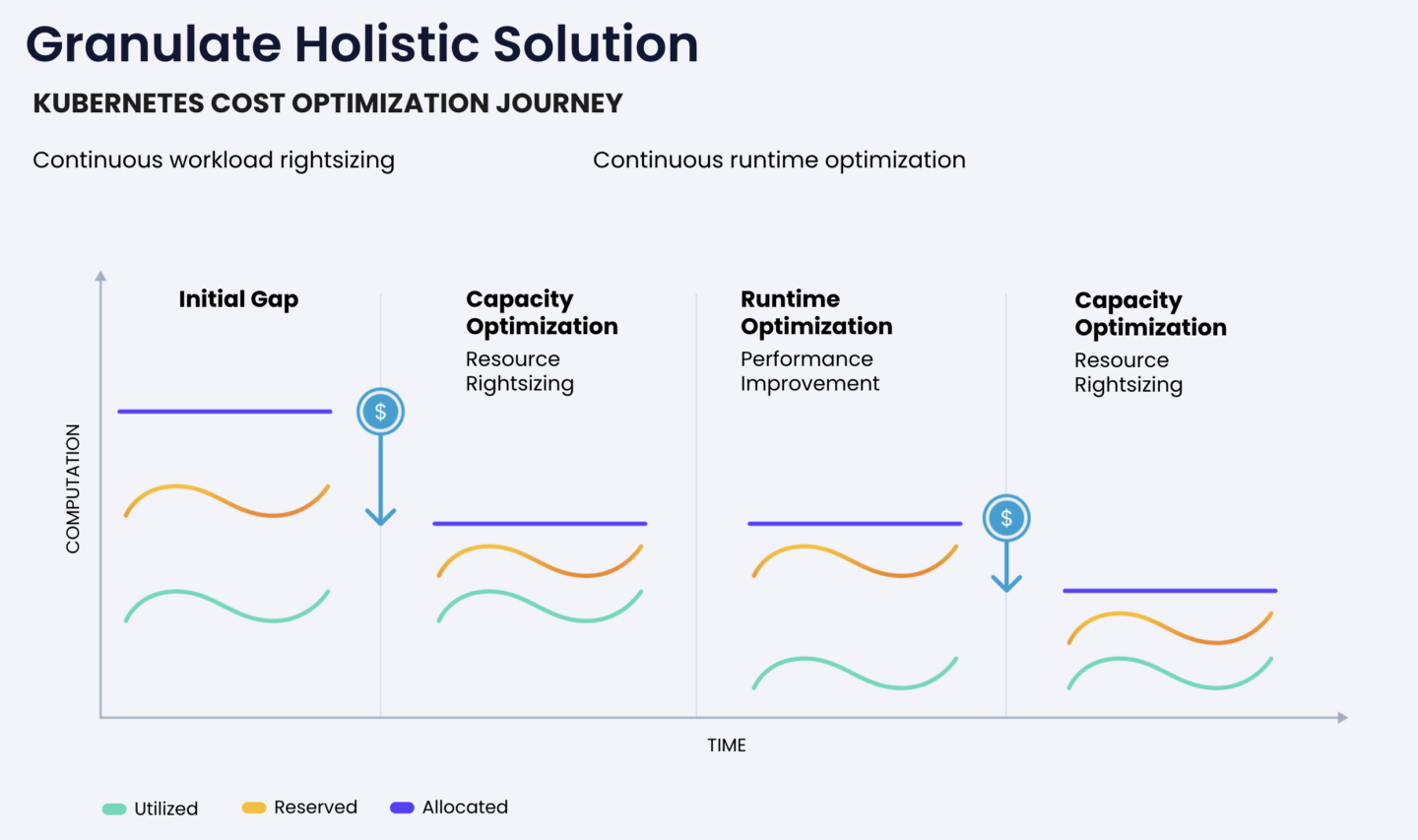

Besides the rightsizing recommendations, Granulate provides another method for cost optimization on Kubernetes workloads. By tapping into Granulate’s holistic solution for application performance optimization, cost reductions naturally follow.

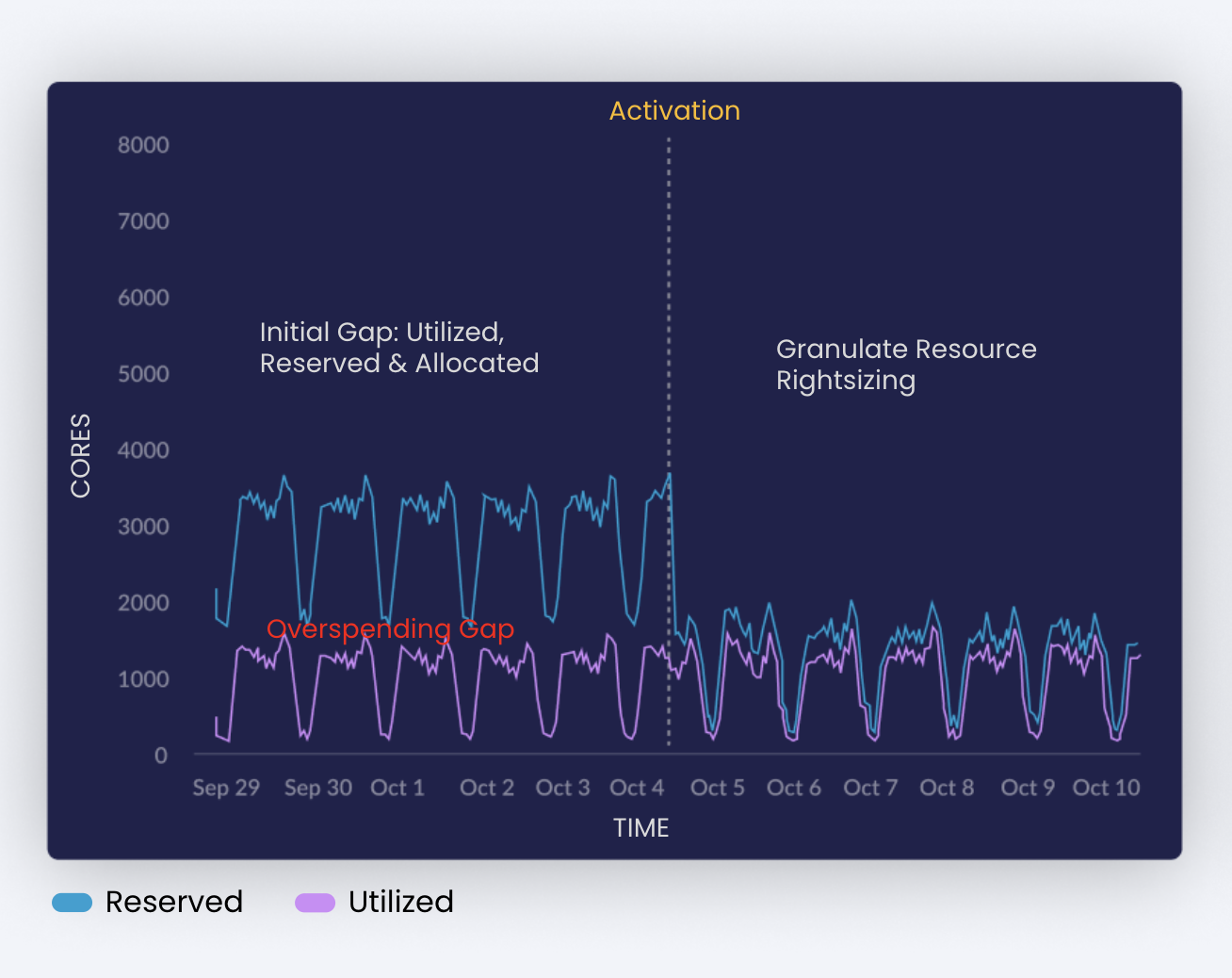

Granulate’s capacity optimization solution is ideal for companies that use Kubernetes. While the new tool allows them to minimize the gap between utilized and reserved resources, Granulate’s application performance optimization improves resource utilization itself, providing another level of cost reduction.