This is the final piece in our series on optimizing AI and the CPU-based applications that enable the explosively popular new technologies to function successfully. To cap it off, we’ll dive into the world of real-time stream processing, a use case that is paramount to AI applications. If you haven’t yet, take some time to catch up on our previous discussions on:

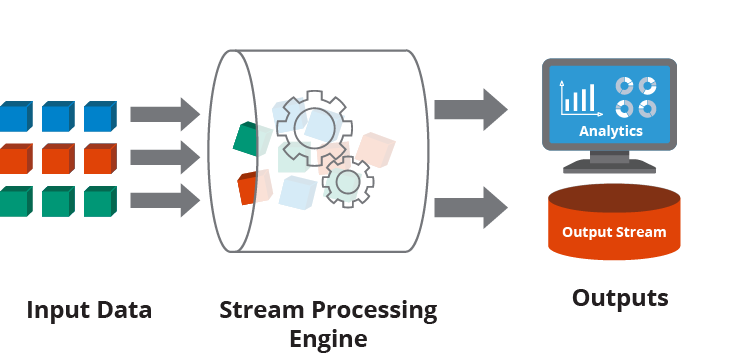

Stream processing is a big data technology for querying continuous data streams and detecting conditions within milliseconds to minutes of the data arriving. It is vital in scenarios where the value of an insight diminishes rapidly over time and a fast response is needed, such as raising an alert when a temperature reading hits the freezing point.
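As a rough illustration of the freezing-point example, here is a minimal Python sketch that checks each reading as it arrives rather than waiting for a batch. The sensor IDs, the `FREEZING_C` threshold, and the `freezing_alerts` function are hypothetical names chosen for this example, not part of any particular streaming product.

```python
from typing import Iterable, Iterator, Tuple

FREEZING_C = 0.0  # assumed alert threshold, in degrees Celsius


def freezing_alerts(readings: Iterable[Tuple[str, float]]) -> Iterator[str]:
    """Yield an alert as soon as a reading at or below freezing arrives."""
    for sensor_id, temp_c in readings:
        # Each reading is evaluated on arrival, so the alert is not delayed
        # by readings that come later in the stream.
        if temp_c <= FREEZING_C:
            yield f"ALERT {sensor_id}: {temp_c:.1f} C"


# Hypothetical sample stream of (sensor_id, temperature) readings.
sample = [("s1", 4.2), ("s2", -0.5), ("s1", 0.0), ("s2", 3.1)]
alerts = list(freezing_alerts(sample))
```

In a real deployment the `readings` iterable would be fed by a message queue or socket instead of a list, but the per-event check would look much the same.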

The technology supports agile decision-making, boosts customer service, strengthens cybersecurity, facilitates data democratization, and increases revenue by providing continuous, contextualized data access for immediate insights and actions. This technology is essential for organizations to remain competitive, responsive, and innovative in today’s fast-paced digital landscape.

Data streaming is pivotal for modern businesses, enabling real-time decision-making and enhancing customer experiences. 72% of modern businesses rely on data streaming to run critical systems, and that trend shows no sign of slowing down.

The Importance of Optimization for Stream Processing Applications

It all comes down to speed.

Fast stream processing is essential whether you’re in retail and need a real-time picture of what a customer is experiencing in order to drive personalized recommendations, or you have a cybersecurity product that must quickly identify data anomalies such as transaction fraud or an IoT malfunction or shutdown.

Optimizing stream processing workloads increases speed primarily by enhancing the efficiency of data processing and reducing unnecessary overhead. When a stream processing system is optimized, it’s streamlined to process only relevant data, use resources more effectively, and minimize delays. Here’s a simplified breakdown:

- Reducing latency: Optimization techniques often focus on decreasing the time it takes for data to be ingested, processed, and output. This could involve refining the processing algorithms to be more efficient or restructuring the data flow to minimize wait times. As a result, data moves through the system faster.

- Increasing throughput: By optimizing the way data is processed, such as parallelizing tasks where possible or improving the allocation of computational resources, more data can be processed in the same amount of time. This boosts the system’s capacity to handle large volumes of data without slowing down.

- Resource utilization: Properly optimized systems make better use of available hardware and software resources, preventing bottlenecks. This means that the system can maintain high processing speeds even as the volume of data fluctuates.
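To make latency and throughput concrete, the following is a minimal Python sketch that computes both from per-event timestamps. The `summarize` function and the sample timestamps are illustrative assumptions; a real pipeline would take these values from its own metrics.

```python
from statistics import mean
from typing import Dict, List, Tuple


def summarize(events: List[Tuple[float, float]]) -> Dict[str, float]:
    """Summarize (ingest_time_s, output_time_s) pairs for a batch of events."""
    # Latency: how long each event spent between ingestion and output.
    latencies = [out - ingest for ingest, out in events]
    # Throughput: events completed per second over the observed time span.
    span = max(out for _, out in events) - min(ingest for ingest, _ in events)
    return {
        "mean_latency_s": mean(latencies),
        "max_latency_s": max(latencies),
        "throughput_eps": len(events) / span if span > 0 else float("inf"),
    }


# Hypothetical timestamps in seconds: (ingested, emitted).
events = [(0.0, 0.5), (1.0, 1.25), (2.0, 2.75), (3.0, 4.0)]
stats = summarize(events)
```

Tracking these two numbers before and after a change is one simple way to check whether an optimization actually reduced delay or raised capacity.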

In essence, optimizing stream processing workloads removes inefficiencies that slow down data processing, allowing for faster analysis and decision-making. For AI applications that rely on real-time stream processing, this increase in speed can be game changing.

When CPU is Better Than GPU for Real-Time Stream Processing

While GPUs are generally the more popular hardware for AI applications, CPUs can be preferable depending on the nature of the workload and the specific requirements of the stream processing task. Here are a few scenarios and characteristics of stream processing tasks where CPUs might be more suitable than GPUs:

- Task complexity and flexibility: CPUs are generally more flexible than GPUs and more efficient at handling complex, branching logic and a wide range of instructions. If a stream processing application involves complex decision-making or needs to execute a variety of different operations, a CPU might handle these tasks more efficiently.

- Latency sensitivity: CPUs can have lower latency in starting tasks and executing short-lived operations due to their architectural design. For real-time stream processing tasks where the utmost priority is minimizing latency, CPUs can sometimes offer an advantage, especially for workloads that aren’t highly parallelizable.

- Data dependency: Stream processing often involves operations where each data point might depend on the previous one (e.g., calculating running totals or applying window functions). CPUs can be better suited for these serial tasks with data dependencies, as GPUs excel in parallel processing where tasks can be executed independently.

- Resource overhead: GPUs require data to be transferred to their memory before processing can start, which can introduce additional latency. For real-time applications where every millisecond counts, this overhead can be a disadvantage. CPUs, with direct access to the main system memory, might incur less overhead in such cases.

- Infrastructure and cost: Usually CPUs are significantly cheaper than GPUs because they are in mass production, consume less power, and are essential to computer systems. Depending on the existing infrastructure and the scale of the operation, deploying CPUs for stream processing might be more cost-effective, especially if the workload doesn’t fully utilize the parallel processing capabilities of GPUs.
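The data dependency point above can be illustrated with a minimal Python sketch of a running total and a sliding-window average. Each output depends on state carried over from earlier events, which is why this kind of work is naturally sequential. The `running_stats` function and the window size are assumptions made for this example.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple


def running_stats(
    values: Iterable[float], window: int = 3
) -> Iterator[Tuple[float, float]]:
    """Yield (running_total, sliding_window_average) after each value."""
    total = 0.0
    recent = deque(maxlen=window)  # keeps only the last `window` values
    for v in values:
        # Both results depend on state built from previous events, so
        # event N cannot be finished before event N-1 has been processed.
        total += v
        recent.append(v)
        yield total, sum(recent) / len(recent)


results = list(running_stats([2.0, 4.0, 6.0, 8.0], window=3))
# results == [(2.0, 2.0), (6.0, 3.0), (12.0, 4.0), (20.0, 6.0)]
```

Parallel algorithms for running totals do exist, but for short-lived, per-event updates like this a straightforward sequential loop is often the simpler fit.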

Summary of differences: CPU vs. GPU

| | CPU | GPU |
| --- | --- | --- |
| Function | Generalized component that handles main processing functions of a server | Specialized component that excels at parallel computing |
| Processing | Designed for serial instruction processing | Designed for parallel instruction processing |
| Design | Fewer, more powerful cores | More cores than CPUs, but less powerful than CPU cores |
| Best suited for | General-purpose computing applications | High-performance computing applications |

It’s important to note that these points are not hard and fast rules. The suitability of CPUs or GPUs for real-time stream processing can vary widely based on the specific requirements of the task, the nature of the data, and the desired outcomes. In many cases, a hybrid approach that leverages the strengths of both CPUs and GPUs might be the most effective solution.

Intel Granulate Solutions for Real-Time Stream Processing

Big Data

Intel Granulate efficiently handles complex workloads across various execution engines, platforms, and resource orchestrations. Its dashboard offers comprehensive visibility into data workload performance, resource utilization, and costs, allowing for effective monitoring and adjustment of optimization activities.

The solution dynamically manages rapid scaling and fluctuating data characteristics, keeping resource allocation constantly updated for maximum efficiency and minimizing CPU and memory waste. By adapting automatically to dynamic data pipelines, it spares data engineering teams from manual adjustments, reducing compute costs and speeding up stream processing operations. Learn more here.

Databricks

Intel Granulate provides next-level autonomous optimization to stream processing applications that run on Databricks. This optimization is enabled through a combination of enhanced dynamic autoscaling, intelligent resource allocation, and JVM runtime optimization. Users can complete more Databricks jobs faster by using Intel Granulate to continuously adapt resources and runtime environments to application workload patterns. Learn more here.

Runtime / JVM Optimization

For real-time stream processing applications, especially those dependent on the Java Virtual Machine (JVM), runtime optimization can make a big difference. Intel Granulate fine-tunes JVM settings for these types of tasks, improving performance by 20-45%.

When combined with the Big Data and Databricks optimizations, this improvement on the runtime level drives even more value. Learn more here.

The Impact of Intel Granulate on CPU-Based Real-Time Stream Processing

Intel Granulate enhances real-time stream processing by optimizing CPU utilization, managing resources cost-effectively, improving scalability and elasticity, streamlining Spark operations, and significantly enhancing data processing speed.

For those developing AI applications that depend on real-time stream processing, Intel Granulate can accelerate data streaming and reduce processing time. With real-time, continuous orchestration, data engineering teams can complete more jobs in less time, increasing throughput on optimized Databricks, EMR, Cloudera, Dataproc, and HDInsight environments.